Soup is dead, long live the soup

A long time ago, in a remote German-speaking land, a group of people established their own platform, a distant relative of Tumblr, allowing users to post any content (images, videos, quotes, text, audio, just links or even ratings of anything), completely free and ad-free.

I joined in 2009; unfortunately, I don’t remember who helped me discover it. I think the first person I followed was a user of Blip (a site later bought by Wykop and killed). That person, in turn, probably got there thanks to a user I remember from the good old days of MyBlogLog (later bought by Yahoo and killed).

Over these eleven years, it turned out that Soup (formerly under soup.io) is not only – or actually not at all – a “picture blog”, and not simply a modified Tumblr clone, but a growing yet tightening community of expression. Yes, the latest memes and commentary on the surrounding reality were always there, somehow days or months earlier than I saw them on any other site. But there were also the tiny little lights, colors and cats, there were beautiful photos that reminded us of what a fascinating world we live in, and there were – most of all – the people behind the selection of their content.

So when the website suddenly announced that it would cease to exist in 10 days, which also meant that from tomorrow everyone would start trying to save their content, causing – once again in the tough history of the site – an overload and a lot of 503 errors … I had to do the same. Soup theoretically provided the ability to export everything under one link, “export RSS” was supposed to contain the full history – but 11 years of content was too much for it and it always ended up with an error after a long wait.

This is how downsouper came to its short life, written in a hurry, because I knew I had to start yesterday. This Python script does what a human would do: it goes to a given profile, views all posts, and tries to work out whether each one was uploaded by the profile owner or reposted from someone else, whether the post is a reaction and, if so, to which post, whether someone reposted it and who… and when it reaches the last post on a given page, it clicks the “more” link. Actually, it takes that link’s URL and starts over, ignoring any posts it has already seen.
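The loop described above can be sketched roughly like this. It is not the actual downsouper code, only a minimal illustration of the "follow the more link, skip what you have already seen" idea. The names `crawl_profile` and `fetch_page`, and the assumption that every post has an `"id"` field, are made up for the example; the part that downloads and parses a real Soup page is deliberately left out and passed in as a function.

```python
from typing import Callable, Dict, List, Optional, Tuple

Post = Dict[str, object]
# A fetched page: the posts found on it, plus the URL behind the "more"
# link (None when there is no further page).
Page = Tuple[List[Post], Optional[str]]


def crawl_profile(start_url: str, fetch_page: Callable[[str], Page]) -> List[Post]:
    """Walk a profile page by page, collecting each post only once.

    `fetch_page` is a hypothetical callback that downloads and parses one
    page; how it does that is outside the scope of this sketch.
    """
    seen_ids = set()       # posts already collected
    visited_urls = set()   # guards against a "more" link that loops back
    collected: List[Post] = []

    url: Optional[str] = start_url
    while url is not None and url not in visited_urls:
        visited_urls.add(url)
        posts, more_url = fetch_page(url)
        for post in posts:
            post_id = post["id"]  # assumed unique identifier of a post
            if post_id in seen_ids:
                continue  # already seen on an earlier page, ignore it
            seen_ids.add(post_id)
            collected.append(post)
        url = more_url  # "click" the more link by starting over with its URL

    return collected
```

Keeping the set of seen posts separate from the page fetching means the loop can be restarted after a 503 error without duplicating anything that was already saved.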

In other words, I approached the problem very differently than most users: not pictures, but content, history, and being able to trace when and where everything happened were what mattered most to me. The script could not download the posted pictures, but that turned out to be unnecessary, because there are proven tools such as wget (and bash and jq), which, “fed” with the data from my export, pulled 19 GB of my soup in 15 minutes.
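I did this part with jq and bash, but the same idea, turning the export into a plain list of URLs for wget, can be sketched in Python. This is only an illustration under assumptions: the export is taken to be a JSON list of posts, and the key `image_url` is a made-up name, not the real field of my file format.

```python
import json
from typing import Iterable, List


def image_urls(posts: Iterable[dict], key: str = "image_url") -> List[str]:
    """Collect unique image URLs from posts, keeping their original order.

    `key` is a hypothetical field name; posts without it are skipped.
    """
    seen = set()
    urls: List[str] = []
    for post in posts:
        url = post.get(key)
        if url and url not in seen:
            seen.add(url)
            urls.append(url)
    return urls


def write_url_list(export_path: str, list_path: str, key: str = "image_url") -> int:
    """Read a JSON export (assumed to be a list of posts) and write one URL per line."""
    with open(export_path, encoding="utf-8") as f:
        posts = json.load(f)
    urls = image_urls(posts, key)
    with open(list_path, "w", encoding="utf-8") as f:
        for url in urls:
            f.write(url + "\n")
    return len(urls)
```

The resulting file can then be handed to wget with `wget -i urls.txt`, which reads the URLs to download from a file.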

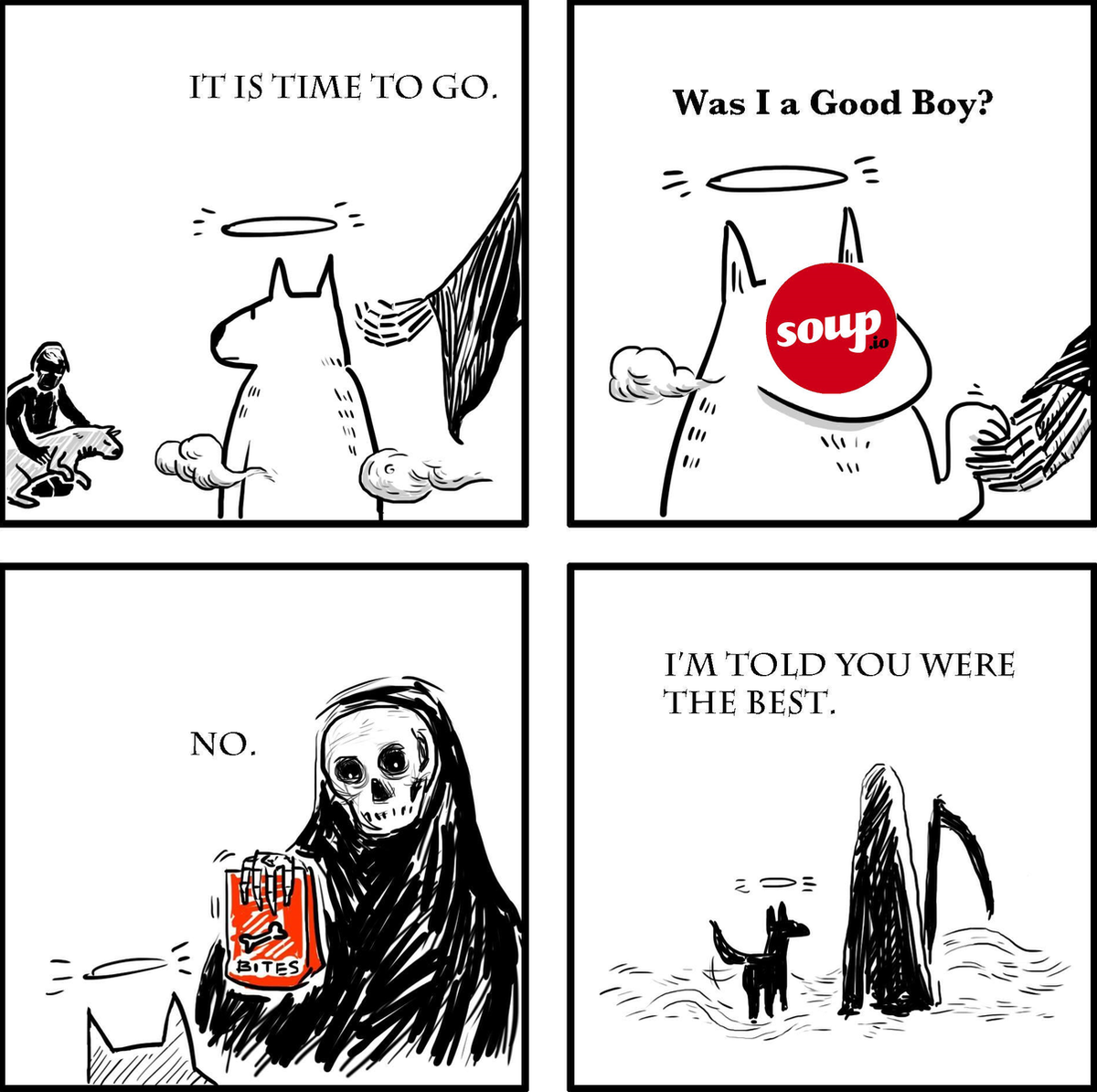

But there’s something that can’t be migrated, exported, or backed up: the community. When Soup went down for three months (!), and then it turned out that it had lost most of the data for the entire year 2016 (!!!), many people left. Now it was certain that the same thing was going to happen again. Like when Blip was taken down. Some will go to Tumblr, some to Twitter, some to some tiny portals, and, like after parting ways at the end of the last year of school, you will never all meet again in one place. You will not follow the same streams of thoughts, you will not be answered by the same almost-strangers.

I will miss that the most.

Nevertheless, I gave a completely new and unknown portal a chance. I wrote asking whether they could handle my custom file format, or whether I should reformat it to the export RSS. They replied “ah, so you are ikari, we already serve it” :).

It’s cool reading the whole history like this.

I’m glad you gave the unknown portal a chance 🙂

Thanks, Jeevan.

It’s a new home. I like it, and it’s important in my everyday breaks when I want to give my brain a little rest.